Google Site Issues

I recently discovered that this site has more problems than I knew about.

I try to keep this site up to date and running smoothly. I think I do a pretty good job with it. I keep the software up to date, I monitor the log files for issues, and in general I’m pretty pleased with how it all runs.

I thought there weren’t any big problems at all. But then I found a bunch of them, on Google.

I don’t use any Google trackers on this site. There are no ads of any kind. I avoid online ads and trackers, and I think it would be hypocritical of me to use those tools on my own web site. But I do use Google’s Search Console. The Search Console keeps a record of where my site lands in Google’s page ranks (hint: not high), and I can watch the new pages I add slowly make their way into Google’s web index as its bots crawl my site.

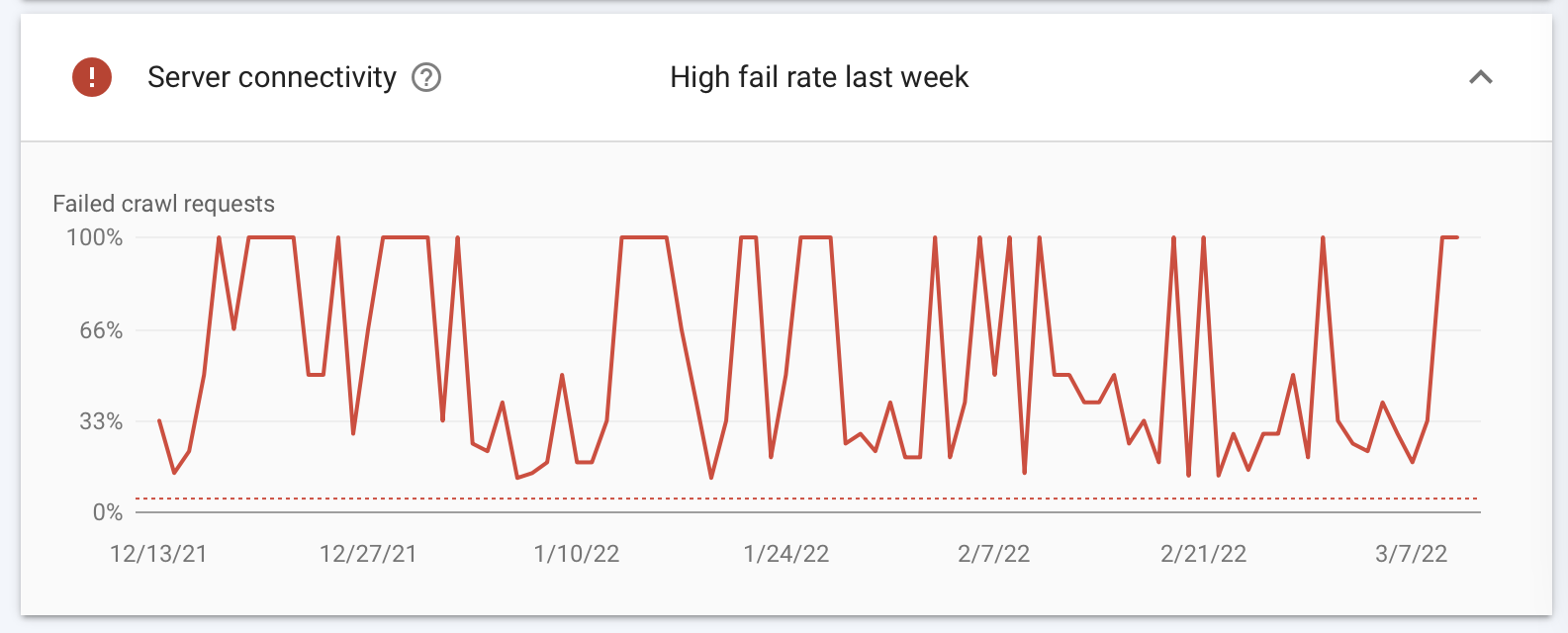

Everything looked fine. Until I was clicking through the site one day and found this.

What the heck?

First of all, this critical error report is buried in their interface. It takes four or five clicks to get there, which is why I had never seen it before. You have to go through Settings > Crawl Stats > site.name > Host status. And there’s nothing about this on the summary home page. I had no reason to suspect that these errors were happening.

There aren’t any errors in my web logs either. With all these “failed crawl requests” you would think that they would be reflected somewhere in the server logs, but no, everything seems fine there.
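For what it’s worth, here is roughly how that kind of log check could be scripted. This is a minimal sketch, not what I actually ran: it assumes the access log is in the common “combined” format that nginx and Apache write by default, and the function names `googlebot_status_counts` and `failed_requests` are made up for illustration. It only matches on the user-agent string, which anyone can fake, so treat the counts as approximate.

```python
import re
from collections import Counter

# Combined Log Format (assumed):
# host ident user [time] "request" status bytes "referer" "user-agent"
LOG_LINE = re.compile(
    r'^(?P<host>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<request>[^"]*)" (?P<status>\d{3}) \S+ '
    r'"(?P<referer>[^"]*)" "(?P<agent>[^"]*)"'
)


def googlebot_status_counts(lines):
    """Count HTTP status codes for requests whose user agent mentions Googlebot."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        # Lines that don't parse, or that come from other clients, are skipped.
        if match and "Googlebot" in match.group("agent"):
            counts[match.group("status")] += 1
    return counts


def failed_requests(counts):
    """Total the 4xx and 5xx responses in a status-code counter."""
    return sum(n for status, n in counts.items() if status[0] in "45")
```

You would feed it the lines of the log file (wherever your server keeps it) and look at the result of `failed_requests`. One caveat: a request that fails before it ever reaches the web server, such as a DNS or connection problem, will never be written to the access log, so a clean result here doesn’t prove the crawler had no trouble.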

So what is it? My best guess is that Google is complaining about my page load times. It’s the main site, reiterate.app, and not the blog subdomain (which is statically generated and is quite fast). When I first built the main site, I used a free template that I got from… somewhere. And it uses jQuery to do a couple of small animations. But that means I’m serving a giant jQuery JavaScript file with every page, and that’s not ideal.

I need to get rid of that. Now that Rails 7 is out and seems stable, I should upgrade from Rails 6. I want to examine the new technologies there. I can replace Webpacker with jsbundling and that should save a lot. Then I can redo all the CSS, and hopefully get rid of jQuery while I’m at it. I’ve never really looked at optimizing the site for load times, so there’s probably a lot of room for improvement.

And I hope that will fix the Google errors, because there’s no explanation from Google about what’s really causing them. I’m just guessing.